Intelligent OCR & Computer Vision Pipeline

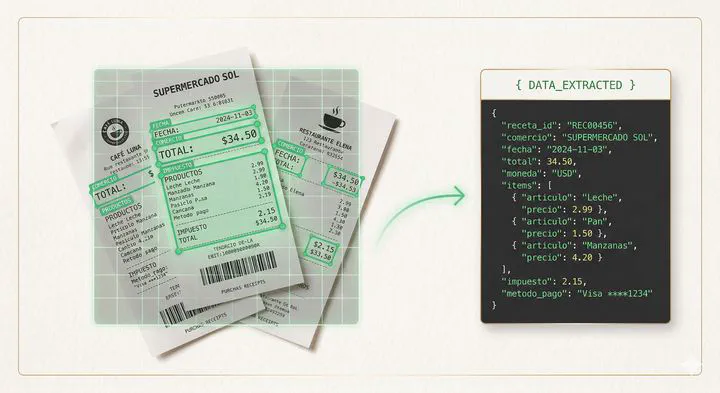

A serverless Azure Function that uses LLM vision models to extract, categorize, and normalize data from customer receipt images. It replaces manual extraction, reaching 85–90% accuracy and reducing analyst workload by roughly 70%.

Project Overview

The company needed to validate customer receipts (tickets) from various merchants as part of its credit-line evaluation process. The challenge was that every ticket has a different structure — different layouts, fonts, fields, and formats — making traditional OCR or rule-based extraction unreliable. Analysts were extracting information manually, which was slow, error-prone, and couldn’t scale.

With the emergence of LLM vision capabilities, the project leveraged Azure OpenAI to intelligently read receipt images, categorize the merchant, extract the relevant fields, and normalize the output into structured JSON — all within a single serverless function call.

The structured output is then forwarded to an external credit-line calculator and persisted in CosmosDB as part of the customer’s evaluation profile.

Architecture

High-Level Flow

Component Breakdown

- Internal Services: The client-facing applications send up to 3 receipt images per request, along with the customer context, to trigger the extraction process.

- Azure Function: The core serverless compute unit that orchestrates the entire flow. It receives the images, calls the vision model, validates the results, forwards them to the calculator, assembles the customer summary, and persists it.

- Azure OpenAI (Vision Model): Processes each receipt image and returns structured data. The model handles merchant identification, field extraction, and data normalization in a single inference call. It is hosted on Azure so that customer data never leaves the company’s cloud boundary.

- External Credit-Line Calculator: A separate service that receives the normalized ticket data and computes the credit-line recommendation.

- CosmosDB: Stores the assembled customer summary, including extracted ticket data, calculator results, and metadata, for downstream consumption.
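
As an illustration of how a receipt image might be handed to the vision model, the sketch below builds a chat-style message list with the image embedded as a base64 data URL, which is the general shape that chat completions endpoints accept for image input. The function name, the prompt text, and the default MIME type are assumptions made for this example and are not taken from the project; the actual client call is omitted.

```python
import base64


def build_vision_messages(
    image_bytes: bytes,
    system_prompt: str,
    mime_type: str = "image/jpeg",  # assumed default, adjust per upload
) -> list:
    """Build a message list carrying one receipt image (hypothetical helper)."""
    # Encode the raw image so it can travel inside the JSON request body.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime_type};base64,{encoded}"
    return [
        {"role": "system", "content": system_prompt},
        {
            "role": "user",
            "content": [
                # Illustrative instruction text, not the project's real prompt.
                {"type": "text", "text": "Extract the receipt data as JSON."},
                {"type": "image_url", "image_url": {"url": data_url}},
            ],
        },
    ]
```

The returned list would be passed as the `messages` argument of a chat completions request against the deployed vision model.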

LLM-Powered Extraction Process

The vision model performs three tasks in a single prompt:

1. Merchant Categorization

Determines whether the ticket belongs to a valid merchant category and classifies it accordingly. Invalid or unrecognizable tickets are flagged and excluded from the credit calculation.
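
A minimal sketch of the flag-and-exclude step follows, assuming the model returns a `merchant_category` field per ticket. The category names, the field name, and the `flag_reason` value are hypothetical; the source does not list the valid categories.

```python
# Hypothetical set of accepted categories; the real list is not given in the source.
VALID_CATEGORIES = {"grocery", "pharmacy", "fuel"}


def split_by_validity(tickets):
    """Separate tickets with a recognized merchant category from flagged ones."""
    valid, flagged = [], []
    for ticket in tickets:
        # Treat a missing or null category the same as an unrecognized one.
        category = (ticket.get("merchant_category") or "").strip().lower()
        if category in VALID_CATEGORIES:
            valid.append(ticket)
        else:
            # Keep the ticket for auditing, but mark why it was excluded.
            flagged.append({**ticket, "flag_reason": "invalid_or_unrecognized_merchant"})
    return valid, flagged
```

Only the `valid` list would go on to the credit calculation; the `flagged` list can still be stored with the customer summary for review.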

2. Field Extraction

Extracts key information from each receipt regardless of its layout:

- Merchant name and category

- Date and time of purchase

- Individual line items and amounts

- Total amount

- Payment method (when visible)

3. Data Normalization

The raw extracted data is normalized into a consistent JSON schema — standardizing date formats, currency values, merchant categories, and field names — so that every ticket, regardless of its original format, produces a uniform output for the calculator.
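
In the project the model itself performs this normalization inside the prompt. The sketch below shows what an equivalent post-processing check could look like in code, under the assumption that dates arrive day-first and amounts may use either `,` or `.` as the decimal mark. The accepted date formats and the separator heuristic are illustrative choices, not the project's rules.

```python
import re
from datetime import datetime
from decimal import Decimal, InvalidOperation
from typing import Optional

# Assumed input formats (day-first); extend to match the receipts actually seen.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d-%m-%Y", "%d/%m/%y")


def normalize_date(raw: str) -> Optional[str]:
    """Return the date as ISO 8601 (YYYY-MM-DD), or None if no format matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def normalize_amount(raw: str) -> Optional[Decimal]:
    """Parse a currency string into a two-decimal Decimal, or None on failure."""
    cleaned = re.sub(r"[^\d.,-]", "", raw)  # drop currency symbols and spaces
    if "," in cleaned and "." in cleaned:
        # Heuristic: the right-most separator is the decimal mark.
        if cleaned.rfind(",") > cleaned.rfind("."):
            cleaned = cleaned.replace(".", "").replace(",", ".")
        else:
            cleaned = cleaned.replace(",", "")
    elif "," in cleaned:
        head, _, tail = cleaned.rpartition(",")
        # A comma followed by exactly two digits is read as a decimal comma.
        if len(tail) == 2:
            cleaned = f"{head.replace(',', '')}.{tail}"
        else:
            cleaned = cleaned.replace(",", "")
    try:
        return Decimal(cleaned).quantize(Decimal("0.01"))
    except InvalidOperation:
        return None
```

Returning `None` instead of raising lets the later validation step treat an unparseable value as an incomplete field.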

Processing Pipeline

Within the Azure Function, each request follows a straightforward pipeline, from receiving the images to returning the final result:

| Step | Action |
|---|---|
| 1. Receive | Accept up to 3 ticket images from the requesting service |
| 2. Extract | Send each image to the Azure OpenAI vision model and receive structured JSON per ticket |
| 3. Validate | Verify extracted fields for completeness and merchant validity |
| 4. Calculate | Forward normalized data to the external credit-line calculator |
| 5. Assemble | Build a customer summary combining ticket data, calculator output, and metadata |
| 6. Persist | Save the customer summary to CosmosDB |
| 7. Respond | Return the final result to the calling service |
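
The seven steps can be sketched as a single orchestration function. This is a simplified outline, not the project's code: the vision call, the calculator, and the CosmosDB write are passed in as plain callables so the flow can be read (and exercised) without any Azure dependency. The field names in `REQUIRED_FIELDS`, the `is_valid_merchant` flag, and the summary layout are all hypothetical.

```python
from typing import Any, Callable, Dict, List

MAX_IMAGES = 3  # the requesting service sends up to 3 images per request
REQUIRED_FIELDS = ("merchant_name", "date", "total")  # hypothetical field names

Ticket = Dict[str, Any]


def is_complete(ticket: Ticket) -> bool:
    """A ticket is complete when every required field has a value."""
    return all(ticket.get(field) not in (None, "") for field in REQUIRED_FIELDS)


def process_request(
    images: List[bytes],
    customer_id: str,
    *,
    extract: Callable[[bytes], Ticket],
    calculate: Callable[[List[Ticket]], dict],
    persist: Callable[[dict], Any],
) -> dict:
    # 1. Receive: enforce the per-request image limit.
    if not 1 <= len(images) <= MAX_IMAGES:
        raise ValueError(f"expected 1 to {MAX_IMAGES} images, got {len(images)}")
    # 2. Extract: one vision-model call per image.
    tickets = [extract(image) for image in images]
    # 3. Validate: keep only complete tickets from valid merchants.
    valid = [t for t in tickets if t.get("is_valid_merchant") and is_complete(t)]
    # 4. Calculate: the external service sees only validated tickets.
    calculator_result = calculate(valid)
    # 5. Assemble: combine ticket data, calculator output, and metadata.
    summary = {
        "customer_id": customer_id,
        "tickets": tickets,
        "calculator_result": calculator_result,
        "metadata": {"images_received": len(images), "valid_tickets": len(valid)},
    }
    # 6. Persist: in the project this is a write to CosmosDB.
    persist(summary)
    # 7. Respond: the summary doubles as the response body.
    return summary
```

Injecting the three dependencies keeps the function a thin coordinator, which matches the single-function architecture described above and makes each step replaceable in tests.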

Validation & Accuracy

To ensure the model was performing reliably before full production rollout, a manual validation process was conducted:

- The call center team was asked to manually extract the same receipt information using their existing process.

- The manual extractions were compared against the LLM-generated outputs to measure accuracy across categories, amounts, dates, and merchant identification.

- This parallel validation confirmed the model’s production readiness.
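
One way such a comparison could be scored is per-field agreement between the manual extraction and the model output for the same receipts. The sketch below assumes both sides have already been normalized to the same schema and are paired in the same order. The function name and the exact-match criterion are assumptions for illustration, not the method the team used.

```python
def field_accuracy(manual, predicted, fields):
    """Share of receipts where the model's value matches the manual value, per field."""
    hits = {field: 0 for field in fields}
    total = 0
    # Records are paired by position: manual[i] and predicted[i] describe the same receipt.
    for manual_record, predicted_record in zip(manual, predicted):
        total += 1
        for field in fields:
            if manual_record.get(field) == predicted_record.get(field):
                hits[field] += 1
    # With no paired records there is nothing to score, so report 0.0.
    return {field: (hits[field] / total if total else 0.0) for field in fields}
```

Exact matching is strict. For amounts or merchant names a tolerance or fuzzy comparison may be more appropriate, which would need to be decided per field.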

Production Results

| Metric | Result |
|---|---|
| Content & category accuracy | 85–90% |
| Workload reduction | ~70% less manual extraction effort |
| Processing time | Near real-time (seconds per request vs. minutes manually) |

Tech Stack

| Layer | Technology |
|---|---|
| Compute | Azure Functions (Serverless) |
| AI / Vision | Azure OpenAI (GPT Vision Model) |
| Storage | Azure CosmosDB |
| Integration | External Credit-Line Calculator API |
| Language | Python |

Conclusion

This project showed that LLM vision models can effectively replace manual data extraction from unstructured document images, even when formats vary significantly across sources. By keeping the architecture simple, with a single Azure Function orchestrating the vision model and downstream services, the solution achieved fast deployment, low operational cost, and a roughly 70% reduction in analyst workload. The 85–90% accuracy rate validated the approach for production use, and the parallel manual validation provided confidence in the model’s reliability.